A primary call to fix Big Tech is for state regulators to write laws and then enforce them. Seems easy enough. Let's be real: it's very much not, in part because what we are regulating for is complex. We want to stop slow violence which is fuelled by monopoly power, new technologies at scale (which bring on new kinds of exploitation), and a whole host of other harms that take time to understand and, in turn, to regulate. Furthermore, by the time we do understand them, it seems that people have already innovated new ways to evade and extract.

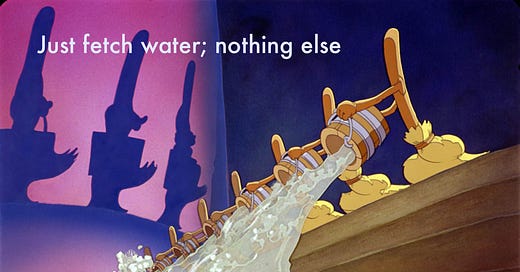

Okay, so let's assume that we have the best policies: they stop some harmful behaviours altogether and require new forms of compliance. Let's say the laws are perfect, and the compliance is thorough and meaningful. I see two problems:

- We confuse a minimum standard (compliance) with a duty of care.
- We make organisations orient their moral thinking to a code of fixed rules rather than a dynamic appreciation of responsibility.

If we have that perfect regulation and develop cultures of compliance that follow the regulation to the letter, what's missing? I've been thinking about the (sometimes healthy) tensions between cultures of compliance and cultures of care.

In doing this, I was drawn to a distinction that Hannah Arendt makes in The Human Condition (thank you Maria Popova for sending me there). In sum, cognition is a processing of information for a particular purpose that can be tested, whereas thinking is an exploration with no beginning or end that allows for the transformation of ideas.

Compliance is an investment in cognition: external regulation will increase the technology industry's focus on compliance. Many businesses, degree programs, and positions will open up with the purpose of complying (and, according to David Graeber, there are similar effects in 'de-regulation', which is really more regulation), and the end result will be a zombified focus on not running afoul of the law.

Thinking is an investment in moral imagination, foresight, and critical capacity to examine and refine decision-making at the intersection of products, platforms, and people.

I worry that companies will optimise for cognition to comply with a minimum standard using a risk-based lens, instead of thinking about the shifting dynamics of responsibility, morality, and integrity. If we want to come out on the other end of our current moment with robust and moral integration of technology across society, we need more thinking. We need exploratory, speculative, sociotechnical critique if we are to successfully travel through technosocial opacity (to use Shannon Vallor's lovely phrase).

At the moment, I'm not sure that companies are truly able to go beyond the cognition of being compliant and into the realm of this important, critical thinking. This thinking can be done externally (e.g. via universities or civil society organisations) to set norms and influence companies from the outside, but it would be way more effective if this work were done internally. We want dynamic, multi-modal teams that make the thinking happen.
